Hardware in the Loop with Jumpstarter

Testing embedded and edge systems traditionally meant dealing with fragile setups, manual resets, and one-off rigs that don’t scale. Jumpstarter changes that — it makes Hardware-in-the-Loop (HIL) cloud-native.

Jumpstarter is a project that we have been working on at Red Hat OCTO, now RHIVOS, and at Hyundai America Technical Center. It was inspired by Labgrid, which is also an awesome project, but we had a different focus: security, and in particular removing the requirement for a direct SSH connection to exporters.

Website: https://jumpstarter.dev

Demo video: Watch on YouTube

What is Jumpstarter?

Jumpstarter is an open-source framework that lets you run, schedule, and manage software + hardware tests, integrating seamlessly with your existing CI/CD systems. The main controller can be installed in Kubernetes or OpenShift, but you can also use local mode in GitHub/GitLab/Jenkins workers without a centralized controller.

You can test your software builds, run firmware updates, perform complex interactions and validate embedded software against real and virtual hardware — all from your pipelines.

🧩 Key Features

- Python-based testing: A rich Python framework helps you write effective tests targeting your devices under test.

- Extensive set of drivers: A broad, extensible driver library, growing every day, helps you interact with your real or virtual hardware.

- Cloud-Native Administration: Deploy and control hardware test nodes using Kubernetes CRDs like Exporters, Clients, and Leases.

- CI/CD integration: Tekton, GitHub, GitLab, and Jenkins support for running your tests.

- Multi-Architecture Support: Works across x86_64 and ARM64, so you can set up exporters on ARM devices too (exporters are the devices that provide access to the buses of your device under test).
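To give a feel for what Python-based testing against drivers can look like, here is a minimal sketch. It does not use the Jumpstarter API: `PowerDriver`, `SerialDriver`, `FakeBoard`, `wait_for`, and `check_board_boots_to_login` are hypothetical names made up for this illustration, and the console output is invented. The idea it shows is that a test talks to small driver interfaces (power, serial), so the same test can run against real or virtual hardware.

```python
from typing import List, Protocol


class PowerDriver(Protocol):
    """Hypothetical power-control interface (not the Jumpstarter API)."""

    def on(self) -> None: ...
    def off(self) -> None: ...


class SerialDriver(Protocol):
    """Hypothetical serial-console interface (not the Jumpstarter API)."""

    def read_line(self) -> str: ...


class FakeBoard:
    """In-memory stand-in for a device under test, with invented output."""

    def __init__(self) -> None:
        self.powered = False
        self._lines: List[str] = []

    def on(self) -> None:
        self.powered = True
        # Pretend the board prints these lines on its console while booting.
        self._lines = ["bootloader ready", "Starting kernel ...", "login:"]

    def off(self) -> None:
        self.powered = False
        self._lines = []

    def read_line(self) -> str:
        if not self._lines:
            raise TimeoutError("no more console output")
        return self._lines.pop(0)


def wait_for(serial: SerialDriver, expected: str, max_lines: int = 50) -> List[str]:
    """Read console lines until one contains `expected`; return all lines seen."""
    seen: List[str] = []
    for _ in range(max_lines):
        line = serial.read_line()
        seen.append(line)
        if expected in line:
            return seen
    raise AssertionError(f"{expected!r} not seen in {max_lines} lines")


def check_board_boots_to_login(power: PowerDriver, serial: SerialDriver) -> List[str]:
    """Power-cycle the board and check that it reaches a login prompt."""
    power.off()
    power.on()
    log = wait_for(serial, "login:")
    assert any("Starting kernel" in line for line in log)
    return log


if __name__ == "__main__":
    board = FakeBoard()  # one fake object plays both driver roles
    print(check_board_boots_to_login(board, board))
```

Because the check only depends on the two small interfaces, swapping `FakeBoard` for objects backed by real hardware would not require changing the test logic.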

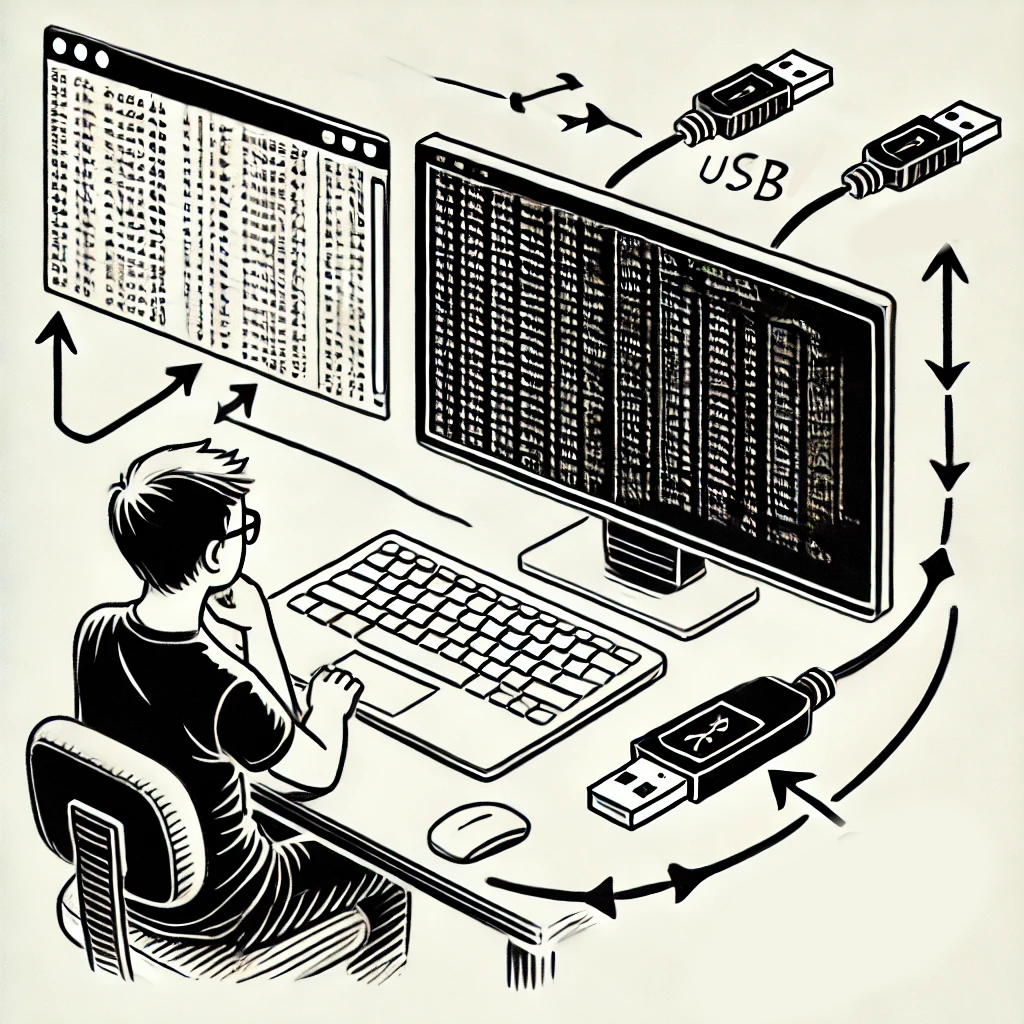

⚙️ Real-World Workflow

In the demo video, you’ll see Jumpstarter running on OpenShift:

- A Tekton pipeline triggers a hardware test build.

- A Lease reserves a physical device.

- The test runs directly on the hardware: programming an MCU, running E2E tests via serial port, webcam capture, etc.

- Results are published automatically in GitLab.
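The lease step in the workflow above can be pictured with a small in-memory model. This is only a sketch of the concept, not how Jumpstarter implements it: `Exporter`, `LeasePool`, and `run_pipeline` are hypothetical names, and the label-selector matching is an assumption made for the example. What it shows is the core guarantee of a lease: one client holds a device exclusively while its steps run, and the device is released afterwards even if a step fails.

```python
from contextlib import contextmanager
from dataclasses import dataclass
from typing import Callable, Dict, Iterator, List, Optional, Tuple


@dataclass
class Exporter:
    """Hypothetical record for a device made available for testing."""

    name: str
    labels: Dict[str, str]
    leased_by: Optional[str] = None


class LeasePool:
    """Toy lease manager: hands out each exporter to one client at a time."""

    def __init__(self, exporters: List[Exporter]) -> None:
        self.exporters = list(exporters)

    @contextmanager
    def lease(self, client: str, selector: Dict[str, str]) -> Iterator[Exporter]:
        # Pick the first free exporter whose labels include the whole selector.
        for exporter in self.exporters:
            if exporter.leased_by is None and selector.items() <= exporter.labels.items():
                exporter.leased_by = client
                break
        else:
            raise RuntimeError(f"no free exporter matches {selector}")
        try:
            yield exporter
        finally:
            # Always release, even when a test step raises.
            exporter.leased_by = None


Step = Tuple[str, Callable[[Exporter], str]]


def run_pipeline(pool: LeasePool, client: str, selector: Dict[str, str],
                 steps: List[Step]) -> Dict[str, str]:
    """Lease one device, run each named step on it, and collect the results."""
    results: Dict[str, str] = {}
    with pool.lease(client, selector) as exporter:
        for name, step in steps:
            results[name] = step(exporter)
    return results


if __name__ == "__main__":
    pool = LeasePool([Exporter("bench-1", {"board": "mcu-a"})])
    steps: List[Step] = [
        ("flash", lambda e: f"flashed via {e.name}"),
        ("serial-test", lambda e: "passed"),
    ]
    print(run_pipeline(pool, "ci-job", {"board": "mcu-a"}, steps))
```

Using a context manager for the lease keeps the release in one place, which is the part a spreadsheet of "who is using what board" tends to get wrong.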

No manual device flashing. No SSH scripts. No spreadsheet tracking who’s using what board.

In addition, developers can lease and access the same hardware resources from their development environment.

🌍 Why It Matters

Jumpstarter bridges the gap between DevOps and embedded testing. It enables continuous delivery for software that runs on physical hardware — something previously confined to lab benches and local setups.

Beyond that, it lets your developers interact and work with the devices in your lab through a rich CLI and Python interface.

Teams can scale their test coverage, catch regressions earlier, and standardize environments across global hardware labs.

🧭 Get Started

Head over to jumpstarter.dev for installation guides, Helm charts, and examples — or check out the GitHub project to start contributing.

If you’re into CI/CD, embedded systems, or edge computing — this project is worth following.